AB Experiments (Firebase)

Run AB Experiments with Firebase, the LiveOps solution from Google.

Introduction

This document explains how to set up AB Experiments with Firebase only.

Requirements

Lion Remote Configs must be installed and set up. You can install the `Lion - Remote Configs` package from the package manager. More info can be found here (reading only the installation guide in the link will be enough).

Ensure that you have the Firebase package configured and set up in your project. More info can be found here.

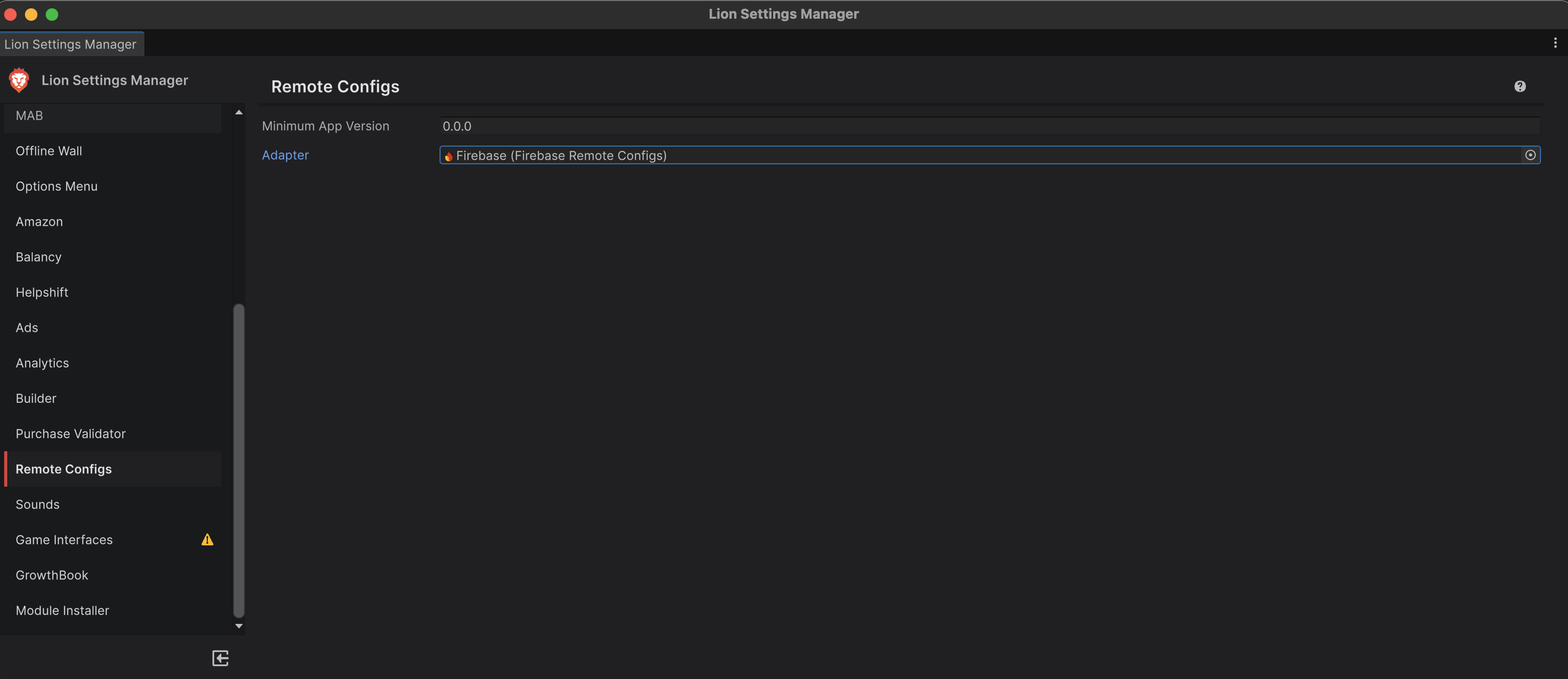

"Firebase" must be selected for the

Adapteroption in the "Remote Configs" settings. You can access this via the LionStudios/Settings Manager menu.

LionSDK will automatically fire the `ab_cohort` event to LionAnalytics if you follow the steps below:

Every experiment needs a unique name to track which variant the player has been assigned for that experiment. LionSDK will automatically check for a parameter with the following naming convention and use it to fire the required `ab_cohort` event for you.

`exp_[FULL EXPERIMENT NAME]` (replace `[FULL EXPERIMENT NAME]` with your unique name). Make sure to use the experiment naming convention described here for your `FULL EXPERIMENT NAME`. Take a look at our example setup below for more details.

Make sure the `exp_[FULL EXPERIMENT NAME]` parameter is removed once the experiment is not running, has concluded, or has been deleted. If the parameter needs to be kept, set its default value to null or empty.
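To make the naming convention concrete, here is a minimal Python sketch of how a client could derive cohort information from fetched Remote Config values. It is not LionSDK's actual implementation: the function name `find_active_cohorts`, the `EXP_PREFIX` constant, the plain-dict representation of the config, and the `exp_kwi_and_OldTest` parameter are all assumptions made for illustration.

```python
EXP_PREFIX = "exp_"  # prefix from the naming convention above


def find_active_cohorts(config):
    """Return {experiment_name: cohort} for every non-empty exp_ parameter.

    `config` is a hypothetical dict of fetched Remote Config values.
    """
    cohorts = {}
    for key, value in config.items():
        if not key.startswith(EXP_PREFIX):
            continue  # ordinary gameplay parameter, not an experiment marker
        if value is None or str(value).strip() == "":
            continue  # null/empty default: no cohort to report
        cohorts[key[len(EXP_PREFIX):]] = str(value)
    return cohorts


fetched = {
    "inter_between_time": "60",
    "inter_start_time": "60",
    "exp_kwi_and_NewInterTimer": "aggressive",
    "exp_kwi_and_OldTest": "",  # hypothetical finished experiment, default left empty
}
print(find_active_cohorts(fetched))  # {'kwi_and_NewInterTimer': 'aggressive'}
```

In this sketch an empty or null value is skipped, which illustrates why a parameter that is kept after an experiment ends should have its default cleared: a leftover non-empty value would keep reporting a cohort.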

Example Experiment (AB Test) Use Case

Firebase Remote Config Experiment (AB Test) Example

Let's say the product team wants to run an experiment using Firebase Remote Config to optimise the level at which interstitial ads start showing in the game. The experiment runs on the game named King Wing (3-letter code: `kwi`) to evaluate the impact of different interstitial ad timings. The platform is Android (3-letter code: `and`). So the full experiment name becomes `kwi_and_NewInterTimer`, with the following variants and configurations:

| Variants | inter_between_time | inter_start_time |
| --- | --- | --- |
| control | 90 | 90 |
| aggressive | 60 | 60 |
| passive | 120 | 120 |

Each variant/cohort of the experiment will receive a different value for the `inter_between_time` and `inter_start_time` parameters, allowing the team to treat users differently by assigning them different values.

There will be 3 experiment variants: "control", "aggressive", and "passive".

Users will be equally distributed among the experiment variants.
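As an illustration of how the variant values could change player experience, the following Python sketch gates interstitials on the two parameters. The semantics are an assumption, since this document does not define them: `inter_start_time` is treated as the seconds of play before the first interstitial, and `inter_between_time` as the minimum seconds between two interstitials. The function `can_show_interstitial` is hypothetical and not part of LionSDK or Firebase.

```python
# Variant values taken from the example experiment above.
VARIANTS = {
    "control": {"inter_between_time": 90, "inter_start_time": 90},
    "aggressive": {"inter_between_time": 60, "inter_start_time": 60},
    "passive": {"inter_between_time": 120, "inter_start_time": 120},
}


def can_show_interstitial(variant, session_seconds, last_shown_at=None):
    """Assumed semantics: no ads before inter_start_time seconds of play,
    then at least inter_between_time seconds between two ads."""
    cfg = VARIANTS[variant]
    if session_seconds < cfg["inter_start_time"]:
        return False  # still inside the ad-free start window
    if last_shown_at is None:
        return True  # first interstitial of the session
    return session_seconds - last_shown_at >= cfg["inter_between_time"]


# 75 seconds into a session, with no interstitial shown yet
for name in VARIANTS:
    print(name, can_show_interstitial(name, 75))
```

Under these assumed semantics, only the aggressive cohort would be eligible for an interstitial at the 75-second mark.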

Firebase Remote Config Setup

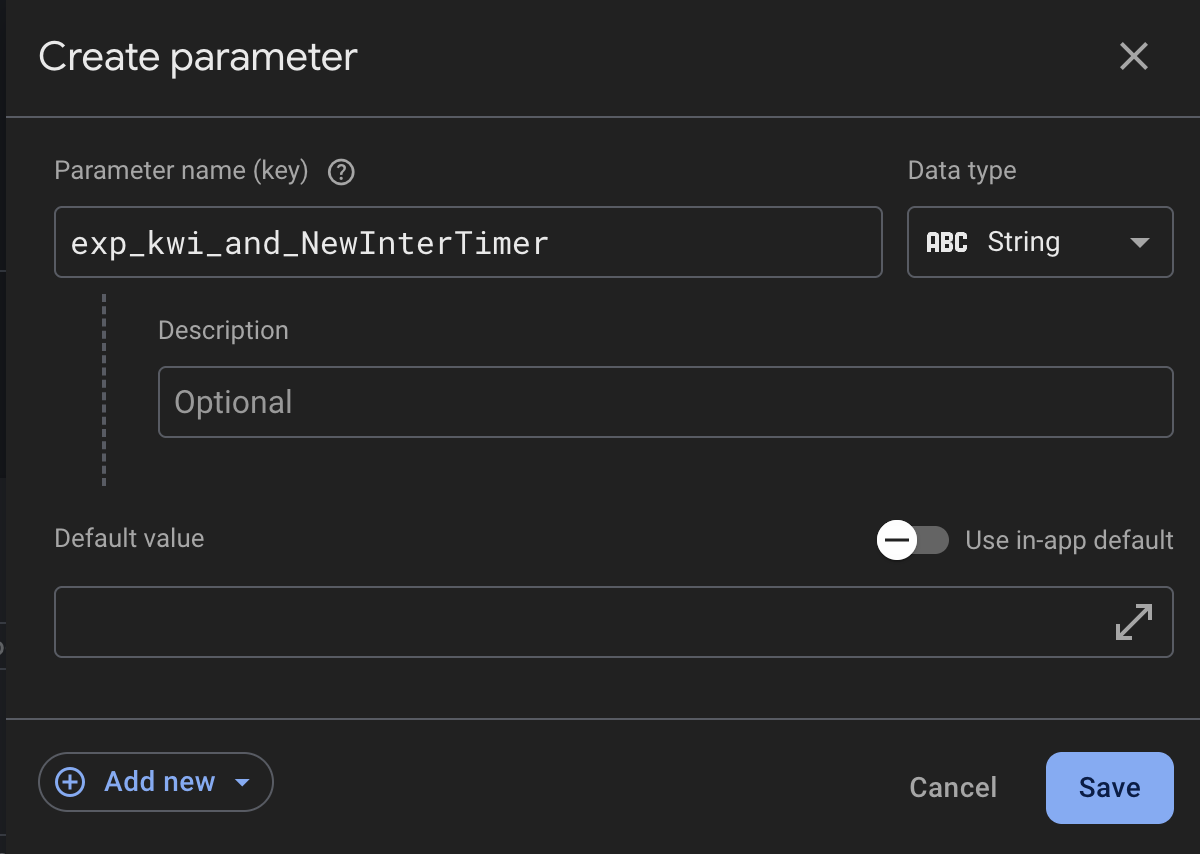

In the Firebase dashboard, go to Remote Config/Parameters and click Add parameter. Add 3 new parameters called `inter_between_time`, `inter_start_time`, and `exp_kwi_and_NewInterTimer`. These 3 parameters will be used in our experiment setup. A button to publish your new changes will appear; select "Publish changes".

The `exp_kwi_and_NewInterTimer` parameter serves as an internal identifier to track which cohort variant a player belongs to. During the experiment it will be set to one of three values: control, aggressive, or passive. Leave its default value empty.
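The three parameters from this step can be pictured as data. The Python sketch below builds a structure shaped like a Remote Config template (a `parameters` map with `defaultValue` and `valueType` entries) as we understand that format; check it against the Firebase documentation before relying on it. The helper `build_parameters` is hypothetical, and the default of 90 for the two timing parameters is an assumption (the control values), since this document does not state their defaults.

```python
import json


def build_parameters(experiment_name, numeric_defaults):
    """Build a template-like dict for the gameplay parameters plus the
    exp_ tracking parameter of one experiment."""
    params = {
        name: {"defaultValue": {"value": str(value)}, "valueType": "NUMBER"}
        for name, value in numeric_defaults.items()
    }
    # Tracking parameter: the default stays empty so that players outside
    # the experiment do not report a cohort.
    params["exp_" + experiment_name] = {
        "defaultValue": {"value": ""},
        "valueType": "STRING",
    }
    return {"parameters": params}


template = build_parameters(
    "kwi_and_NewInterTimer",
    {"inter_between_time": 90, "inter_start_time": 90},  # assumed defaults
)
print(json.dumps(template, indent=2))
```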

In the Firebase dashboard, go to the Remote Config/AB Tests tab and click Create Remote Config experiment. Create an experiment named `kwi_and_NewInterTimer` in the Firebase Console. This experiment will run on a game called King Wing (3-letter code `kwi`) on the Android platform. Note: You must use your own game code, platform and experiment name.

Next, set up your Targeting. You can select which platform (Android or iOS) you want your experiment to run on, as well as what percentage of your player base will be tested. Note: You must set up the same experiment separately for iOS and Android. In our case, we will be using Android.

Set up the "Goals" section.

Set up the variants for this experiment.

Looking back at our example use case, we want to have 3 groups: `control`, `aggressive` and `passive`.

Each group will have different values for the 3 new parameters (`inter_between_time`, `inter_start_time`, and `exp_kwi_and_NewInterTimer`) we created in Step 1 (Remote Config/Parameters).

Create 3 variants and set the values for each of the parameters.

Make sure the value of the `exp_kwi_and_NewInterTimer` parameter matches the name of the group it belongs to.
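Before entering the variants in the console, it can help to write them down and check them for consistency. The Python sketch below is a hypothetical pre-flight check, not a Firebase or LionSDK feature: `check_variants` verifies that every variant sets all three parameters and that the tracking parameter's value equals the variant name.

```python
EXP_PARAM = "exp_kwi_and_NewInterTimer"
REQUIRED = {"inter_between_time", "inter_start_time", EXP_PARAM}

# Planned variant values from the example experiment.
variants = {
    "control": {"inter_between_time": 90, "inter_start_time": 90, EXP_PARAM: "control"},
    "aggressive": {"inter_between_time": 60, "inter_start_time": 60, EXP_PARAM: "aggressive"},
    "passive": {"inter_between_time": 120, "inter_start_time": 120, EXP_PARAM: "passive"},
}


def check_variants(planned):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    for name, values in planned.items():
        missing = REQUIRED - set(values)
        if missing:
            problems.append(f"{name}: missing {sorted(missing)}")
        if values.get(EXP_PARAM) != name:
            problems.append(
                f"{name}: {EXP_PARAM} is {values.get(EXP_PARAM)!r}, expected {name!r}"
            )
    return problems


print(check_variants(variants) or "all variants consistent")
```

A mismatch such as a `passive` variant whose tracking parameter is set to `control` would be reported here; in a live experiment it would attribute those players to the wrong cohort.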

Click "Review", and now you can start the experiment when you are ready.

Go to A/B Testing → Start Experiment.

Once the experiment has concluded, you can apply the best-performing variant as the default value for all users.
